Frameworks require an SEO-sensitive structure. Start with audits of rendering, routing, and Core Web Vitals (CWV), then apply SSR/ISR and internal linking patterns that scale without risking indexation.

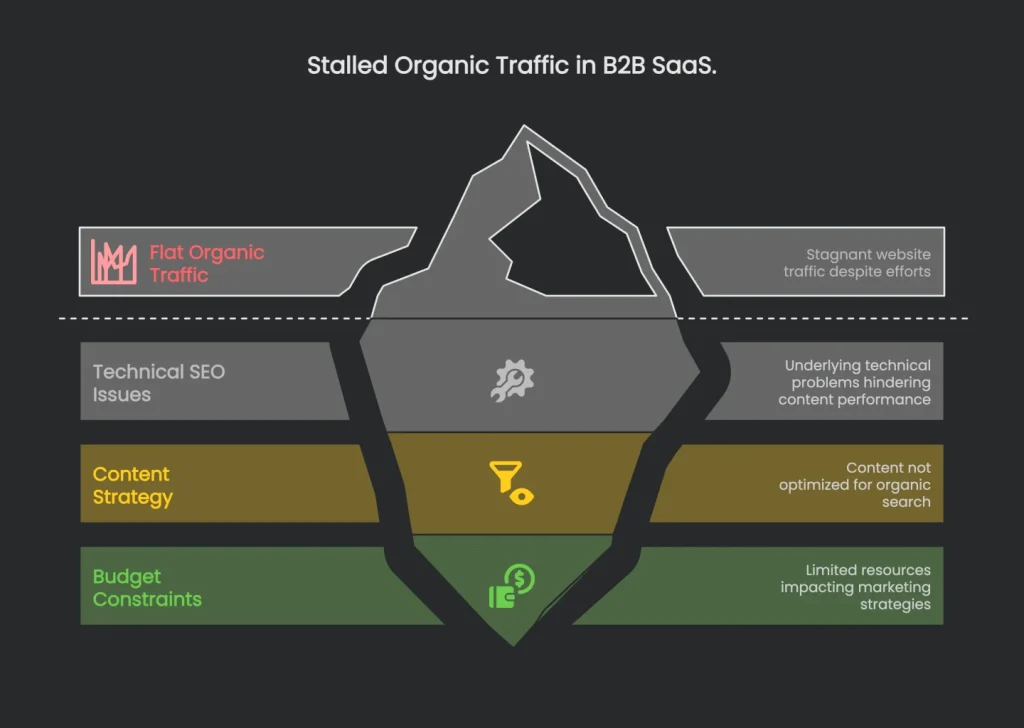

The Challenge: When Good Content Fails

High-Stakes Context

In the crowded online space for B2B SaaS, a killer dashboard is only half the battle. Prospects still have to find it and decide to stay. That was the stickiest puzzle for a project management software client. Their product shimmered with smart features. The marketing team shipped gem-quality content: blogs, build stories, feature spotlights, and step-by-step guides. However, the team set a strict constraint: stop paying for pricey ads that looked wise in the short term but hurt later. They wanted a self-driving growth engine that produced qualified, 100% organic leads.

Why Organic Traffic Matters

In a world of five-month buyer journeys and several decision-makers testing the free trial, clear ROI rules. Therefore, a consistent flow of organic traffic is not just a pretty chart. For SaaS, it is the lever that pulls down Customer Acquisition Cost (CAC) and smooths revenue.

Flat Results Despite Effort

Even though the team worked overtime, they hit a wall. Organic traffic was flat, and demo requests from search stopped moving. They produced more guides, videos, and podcasts. However, instead of sky-high bars, the numbers barely changed. “Is SEO still worth our energy?” they asked at the weekly stand-up.

The Hidden Culprit

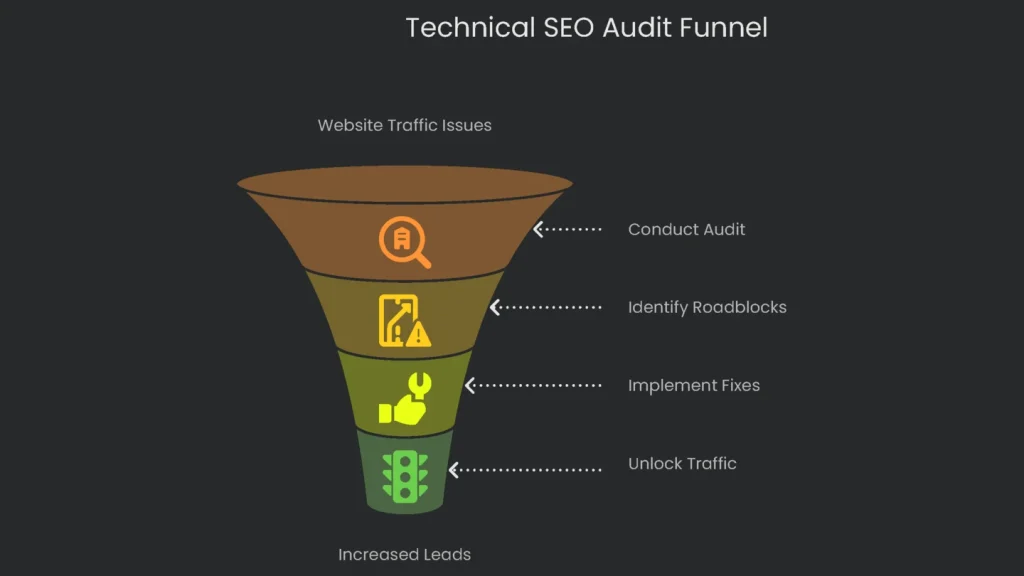

This kind of stall is common. Many B2B SaaS companies assume they need “just one more article.” However, the real villain often hides in technical issues. Pouring more content onto a site with broken foundations makes the problem worse. It is like fixing a flat tire with air but never patching the hole. The stories, SEO dreams, and traffic peaks were ready. They just needed freedom from invisible walls. So the team called us to find the padlocks and ask, “Why is great content still stuck at the starting gate?”

Uncovering the Bottlenecks

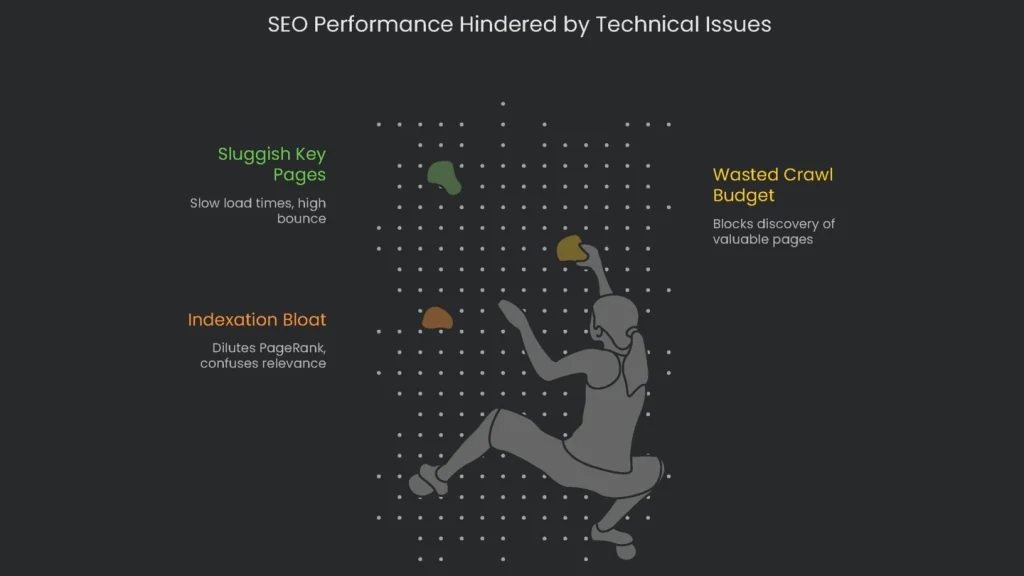

Wins started on the first call with the first diagnosis. The SEO puzzle begins with tiny details few want to dig through. Therefore, we dove into gigabytes of raw data rather than summaries. Dashboards tell fine stories. However, the strongest plotlines appear only under analysis. So, we ran a full technical audit that mimics Googlebot’s crawl. Screaming Frog scanned page clusters. A log file analyzer replayed server requests. PageSpeed Insights exposed timing stats. As a result, a single, uncut view of the site’s DNA emerged. Three thorny bottlenecks snapped into focus.

Finding 1: Indexation Bloat

The crawl and the “site:” command told a clear story. There were ~15,000 indexed URLs, yet only ~2,000 were true assets: product pages, solutions, and crafted blog entries. Tracing back, we found more than 7,000 look-alike pages. Help-document snippets and knee-jerk search filters had created thin or near-duplicate URLs. Consequently, indexed clutter diluted PageRank and weakened the pages that mattered. Worse, duplication confused relevance and triggered keyword cannibalization. In one case, help-desk pages spun endless URL variations, and those variations competed with product detail pages.
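One way to surface look-alike pages from a crawl export is to strip presentation-only parameters and group what remains. The sketch below is a minimal illustration, not the audit tooling used here: the `IGNORED_PARAMS` set, the function names, and the example.com URLs are all assumptions made for the example.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed set of parameters that change presentation or tracking, not content.
IGNORED_PARAMS = {"sort", "filter", "utm_source", "utm_medium", "utm_campaign"}


def normalize(url: str) -> str:
    """Drop ignored parameters, fragments, and trailing slashes from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    path = parts.path.rstrip("/") or "/"
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, query, ""))


def duplicate_clusters(urls):
    """Group URLs by normalized form and keep groups with more than one member."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


# Hypothetical crawl export.
crawled = [
    "https://example.com/help/articles?sort=newest",
    "https://example.com/help/articles?sort=oldest&utm_source=newsletter",
    "https://example.com/help/articles/",
    "https://example.com/pricing",
]
clusters = duplicate_clusters(crawled)
for hero, members in clusters.items():
    print(hero, len(members))
```

Each key in `clusters` is a candidate hero URL, and its members are the variants to review. URL matching only catches parameter-driven duplicates; thin or near-duplicate content under distinct paths still needs a content comparison.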

Finding 2: Wasted Crawl Budget

The bigger worry was whether those endless URLs blocked discovery of high-value pages. So we pulled server logs—the raw, timestamped record of every request. We replayed Googlebot’s visits to see where time drained away. The picture was stark. Googlebot munched through budget on bloated, low-value areas and missed shiny new content. Think of a crawl budget as a small timer. It limits how long the bot crawls your site. In this case, the timer burned on hundreds of client-side help-desk URLs. As a result, key product updates and rich posts took weeks to enter the index. The team’s frustration now made sense. It was not a demand problem. Google had not seen the new posts at all.
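A log replay of this kind can be approximated by counting Googlebot requests per site section. The sketch below assumes the server writes the common "combined" access log format. The sample lines, IP address, and helper names are invented for illustration. It matches on the user-agent string only, which can be spoofed, so a production check would also verify the requesting IP.

```python
import re
from collections import Counter

# Captures the request path and user agent from a combined-log-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def section(path: str) -> str:
    """Return the first path segment, e.g. '/help' for '/help/articles?sort=a'."""
    first = path.split("?", 1)[0].strip("/").split("/", 1)[0]
    return "/" + first


def googlebot_hits_by_section(lines):
    """Count requests per top-level section whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[section(match.group("path"))] += 1
    return hits


# Hypothetical sample data in combined log format.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"


def sample_line(path: str, agent: str) -> str:
    return (
        f'203.0.113.5 - - [01/Jan/2024:06:00:00 +0000] '
        f'"GET {path} HTTP/1.1" 200 512 "-" "{agent}"'
    )


log_lines = [
    sample_line("/help/articles?sort=newest", GOOGLEBOT),
    sample_line("/help/articles?sort=oldest", GOOGLEBOT),
    sample_line("/help/search?q=export", GOOGLEBOT),
    sample_line("/blog/new-feature", GOOGLEBOT),
    sample_line("/pricing", BROWSER),
]
hits = googlebot_hits_by_section(log_lines)
for name, count in hits.most_common():
    print(name, count)
```

Sorting sections by hit count shows where crawl activity concentrates. A section that dominates the count while contributing little revenue is a candidate for the crawl controls described later.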

Finding 3: Sluggish Key Pages

PageSpeed Insights revealed a third problem that hurt users and sales. The “Request a Demo” and pricing pages each had a Largest Contentful Paint (LCP) of over four seconds. LCP measures how long the main content on the page takes to load. Times past four seconds are rated “Poor” and feel awful. If a lead-gen page loads like molasses, visitors bail. For B2B shoppers, the first impression becomes, “This product might also run slow.” Research backs this up: slow load times lift bounce rates. Here, render-blocking JavaScript and oversized images were the culprits.
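The rating bands behind those labels are simple to encode. This small sketch uses the published Core Web Vitals thresholds for LCP (2.5 seconds and 4.0 seconds). The function name is hypothetical, and the two sample values are the before and after averages reported later in this case study.

```python
def classify_lcp(seconds: float) -> str:
    """Map an LCP measurement in seconds to its Core Web Vitals rating."""
    if seconds <= 2.5:
        return "Good"
    if seconds <= 4.0:
        return "Needs Improvement"
    return "Poor"


for label, value in [("before", 4.2), ("after", 1.8)]:
    print(label, value, classify_lcp(value))
```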

These challenges tangled like a bike chain and braked growth. Index bloat created thin pages that soaked up the crawl limit and hid value. When a visitor reached a crucial page, poor LCP made it drag, so they bounced. Consequently, user signals dipped and rankings slid. Therefore, breaking the cycle became the next step.

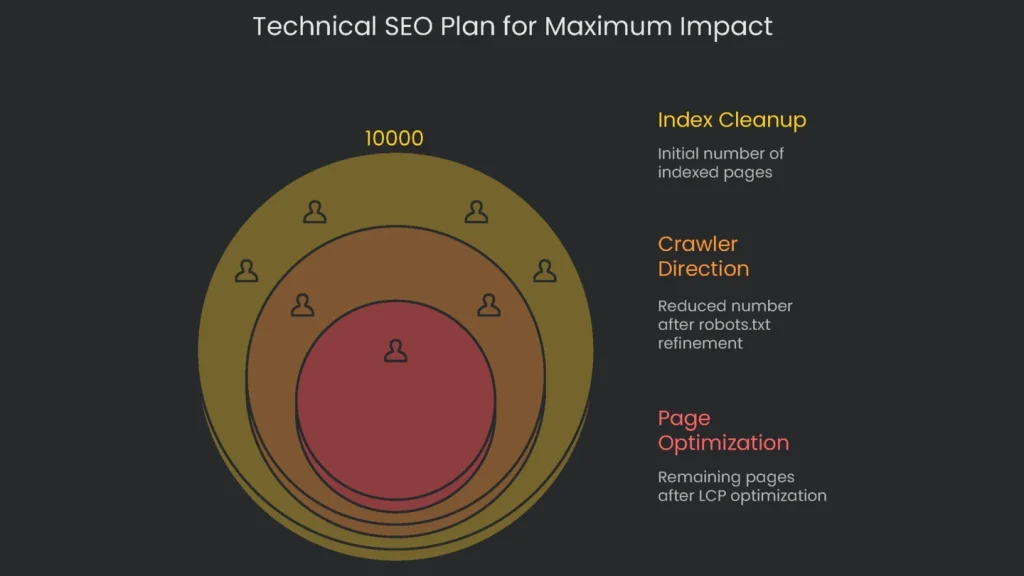

The Plan: SEO Strategy That Moves the Needle

Overview

A winning technical SEO plan is not random fixes. It is a designed blueprint for maximum business impact. We tackled the biggest problems first to lay a rock-solid base. With this client, the plan rolled out in three phases. Each phase built on the last for steady, compounding progress.

Phase 1: Cleaning Up the Index

Our first move stopped Google from scoring the site on junk. Why polish a site that still shows empty event pages and widget views? To trim the pile and keep authority healthy, we used two techniques: rel=canonical links and the noindex tag.

- The Action: We mapped duplicate clusters. For each group, we chose a hero page and added a rel=canonical to the clones. That tag signals, “Send the credit to this URL.” For one-off help-desk articles few link to and filtered search pages with no lasting value, we used noindex. It tells Google, “Keep this out of the index.”

- Why We Did It: Cutting index bloat strengthens the set. Canonical tags guided duplicates to a single authority. Noindex removed pages nobody needed. As a result, equity stopped leaking and flowed to revenue pages.
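The two techniques above boil down to a per-page decision about which tag goes in the page head. The following is a minimal sketch of that decision, assuming a hypothetical `indexing_tag` helper. The self-referencing canonical for non-duplicate pages is a common convention added here as an assumption, not something the case study states.

```python
from html import escape
from typing import Optional


def indexing_tag(
    page_url: str, hero_url: Optional[str] = None, low_value: bool = False
) -> str:
    """Return the <head> tag for a page under the Phase 1 rules."""
    if low_value:
        # One-off help articles and filtered search pages leave the index.
        return '<meta name="robots" content="noindex">'
    # Duplicates point at their hero page. Other pages point at themselves
    # (an assumed convention, see the note above).
    target = hero_url or page_url
    return f'<link rel="canonical" href="{escape(target, quote=True)}">'


print(indexing_tag("https://example.com/features?ref=nav",
                   hero_url="https://example.com/features"))
print(indexing_tag("https://example.com/help/search?q=export", low_value=True))
```

A page should carry one signal or the other. Combining noindex with a canonical to a different URL sends search engines mixed instructions.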

Phase 2: Directing the Crawlers

With a cleaner index, we focused Googlebot’s time on high-value URLs.

- What We Did: We refined robots.txt to keep low-value areas off the crawl path. By adding a directive like:

Disallow: /help/articles?*sort=

we stopped crawling of parameterized, filtered lists. Now Google scans pages that matter to users and to revenue.

- Why We Did It: This directs traffic so Googlebot knows where to go. It saves crawl budget more directly than noindex tags do. A noindex page must still be fetched to read the tag. Robots.txt blocks the fetch entirely. The trade-off is that a noindex tag on a blocked page is never seen, so pages that need to leave the index should drop out before they are disallowed. Consequently, saved budget shifts to product, solutions, and fresh posts. Discovery speeds up, and visibility rises.
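Before shipping a wildcard rule like the one above, it helps to test which URLs it would catch. The sketch below hand-rolls the pattern matching Google documents for robots.txt paths, where `*` matches any run of characters and a trailing `$` anchors the end. It is a simplified check under assumptions: it ignores Allow rules, rule precedence, and user-agent groups, and the function names and test paths are hypothetical.

```python
import re


def rule_to_regex(rule: str) -> re.Pattern:
    """Translate a robots.txt path rule into a compiled regular expression."""
    anchored = rule.endswith("$")
    body = rule[:-1] if anchored else rule
    # Escape literal pieces and let each '*' match any run of characters.
    pattern = ".*".join(re.escape(piece) for piece in body.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))


def is_blocked(path_and_query: str, disallow_rules) -> bool:
    """Return True if any Disallow rule matches from the start of the path."""
    return any(rule_to_regex(rule).match(path_and_query) for rule in disallow_rules)


rules = ["/help/articles?*sort="]
for candidate in [
    "/help/articles?sort=newest",
    "/help/articles?page=2&sort=title",
    "/help/articles/getting-started",
    "/pricing",
]:
    print(candidate, is_blocked(candidate, rules))
```

With this rule, the two sorted list URLs are blocked while the article page and the pricing page stay crawlable, which is the intended outcome.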

Phase 3: Making It Worthwhile

Once Google focused on priority pages, we made those pages irresistible. The goal was a quick, smooth experience that nudges visitors to convert.

- What We Did: We partnered with developers for two upgrades to improve LCP. First, we delayed non-critical scripts on key landing pages until after the main content rendered. Next, we optimized large images, then converted them to WebP format for lean, crisp files.

For step-by-step help, see this guide for faster pages.

- Why We Did It: The target was a “Good” LCP. By deferring scripts and tightening visuals, LCP dropped from 4+ seconds to well under 2.5 seconds. As a result, new visitors landed on fast pages, and conversion odds rose. Mission accomplished!

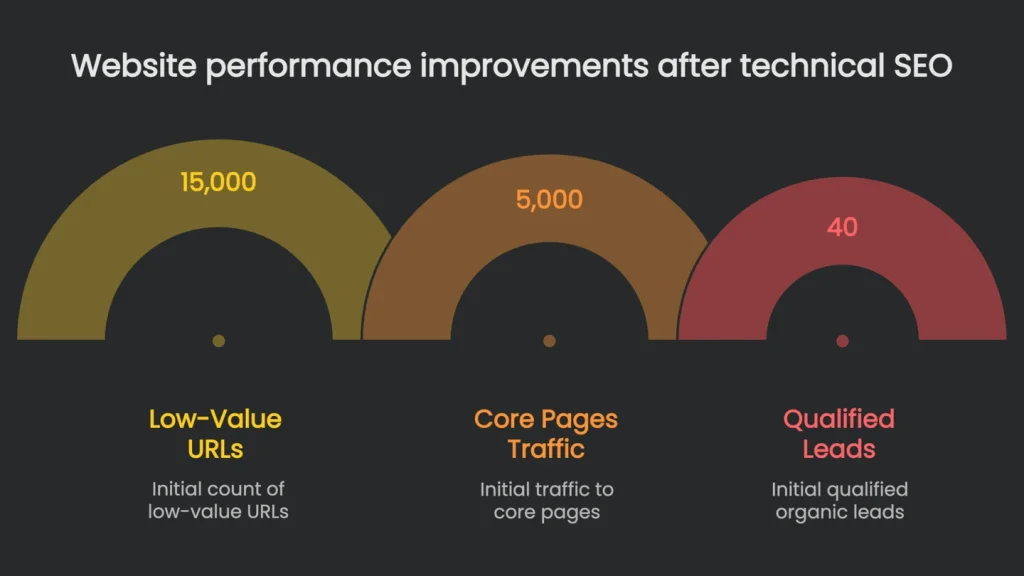

The Results: From Fixes to Growth

The real proof is profit. Therefore, here is how technical fixes translated to growth. Benefits arrived in waves. We started with tech wins and rode the momentum into sustainable business impact.

First 3 Months: Technical Health

In three months, the cleanup showed fast gains. The Health Score in Ahrefs Site Audit jumped 45 points, from 42 to 87. This spike came from fixing thousands of duplicate content issues, broken links, and crawl failures.

Google Search Console echoed the shift. In the “Pages” report, indexed low-value URLs fell from ~15,000 to under 1,000. Consequently, Google focused on the good stuff.

First 6 Months: Traffic and Rankings

As Googlebot cruised the polished site, visibility and visits multiplied. Six months later, organic traffic to core product pages—those the sales team relied on—rose by 85%. This came from stronger rankings on relevant keywords. For example, “project management software for small teams” jumped from page three into the top five on page one. That is vital because the top position gets about 27.6% of clicks, while pages two and three get very little.

The Big Picture: More Business

The ultimate win was leads. Relevant visitors saw better UX and streamlined landing pages. Consequently, those pages became a conversion machine. Demo requests from organic users rose 110%. It was not only more eyeballs. It was the right eyeballs on pages ready to close.

The surge of solid leads let the client cut pay-per-click spend. CAC dropped, proving that a well-built technical SEO backbone pays dividends over time.

The table below sums up how a clunky site became a lead generator within six months.

| Metric | Baseline (Before) | Result (6 Months After) | Improvement |
| --- | --- | --- | --- |
| Ahrefs Health Score | 42 | 87 | +45 points |
| Indexed Low-Value URLs (GSC) | ~15,000 | < 1,000 | -93% |
| Avg. LCP on Key Pages | 4.2 seconds | 1.8 seconds | -57% |
| Organic Traffic to Core Pages | 5,000 / month | 9,250 / month | +85% |
| Qualified Organic Leads | 40 / month | 84 / month | +110% |
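The percentages in the Improvement column follow from the before and after values. As a quick check of the arithmetic, this sketch recomputes them. It treats "< 1,000" as exactly 1,000, which is an assumption that makes the indexed-URL figure a lower bound on the real reduction.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


# Values copied from the table above ("< 1,000" treated as 1,000).
rows = {
    "Indexed Low-Value URLs": (15_000, 1_000),
    "Avg. LCP on Key Pages (s)": (4.2, 1.8),
    "Organic Traffic to Core Pages": (5_000, 9_250),
    "Qualified Organic Leads": (40, 84),
}
changes = {
    name: round(percent_change(before, after))
    for name, (before, after) in rows.items()
}
for name, change in changes.items():
    print(f"{name}: {change:+d}%")
```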

The data reads like a Cinderella story for URLs. By shoring up the back end—raising the Ahrefs score and booting bloated URLs—we welcomed search engines and humans alike. Consequently, pages loaded faster, traffic grew, and high-quality leads followed.

Conclusion: Let Technical SEO Keep the Engine Running

Key Takeaway

Technical SEO edits are not chores. They are the concrete slab every strategy sits on. For this B2B software firm, a site in peak technical shape let its blog, case studies, and guides finally shine. As a result, a flat channel became a reliable source of deals.

Spot the Signs

When promotions drive traffic but leads stay slow—even with strong topics and distribution—something buried is off. Bloated indexes, wasted crawl budget, and shaky on-page experiences create potholes no infographic can bridge. After a surgical audit and smart repairs, we repaved the highway for both Google and real visitors.

Compounding Returns

In the end, investing in technical SEO pays again and again. Once the under-the-hood work lands, every new post and refreshed landing page performs better. Traffic grows. More importantly, the right traffic arrives, fills forms, books demos, and keeps the curve moving up.

Unlock Your Growth Potential

Get a Technical SEO Audit

Frustrated that your killer blog series is not lifting organic visitors? More than likely, a hidden technical roadblock sits between your page and the people who need it. Crawlers may miss important pages, or your mobile layout may confuse bots. If so, no amount of excellent writing will break through until you fix the basics. Have our B2B SaaS SEO pros pinpoint the issues keeping your site from growing. You can click here to check out the success stories, then let’s start unlocking hidden traffic and leads. You may be one fix away from a breakthrough.

Implementation steps

- Review your rendering strategy to confirm you’re using the right mode (SSR, SSG, or ISR) for each page type and that the framework accounts for every route.

- Gather baseline numbers on key tech performance (indexability, Core Web Vitals) for your most important templates.

- Implement SSR or a hybrid solution, and confirm that internal routes resolve to crawlable URLs and that internal links render as standard anchor tags.

- Cut down bundle sizes, control third-party scripts, and tag pages with structured data only when it’s useful.

- Track performance through logs, Google Search Console, and real-user monitoring, and refine it with regular dev sprint work.
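For the first step, a quick way to spot client-side-only rendering is to check whether key copy appears in the raw HTML response, outside of script tags. The sketch below is one rough heuristic under assumptions, not a full rendering audit: the class name, function name, and sample markup are all hypothetical, and the HTML strings stand in for what a plain HTTP fetch of a route would return.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that appears outside <script>, <style>, and <noscript>."""

    SKIPPED = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth and data.strip():
            self.chunks.append(data.strip())


def is_server_rendered(raw_html: str, expected_phrase: str) -> bool:
    """Return True if the phrase is present in the HTML before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    return expected_phrase.lower() in " ".join(parser.chunks).lower()


# Hypothetical responses for the same route under two rendering modes.
csr_shell = '<html><body><div id="root"></div><script>render("Request a Demo")</script></body></html>'
ssr_page = "<html><body><main><h1>Request a Demo</h1></main></body></html>"

print(is_server_rendered(csr_shell, "Request a Demo"))
print(is_server_rendered(ssr_page, "Request a Demo"))
```

A route that fails this check depends on JavaScript execution for its main content and is a candidate for SSR, SSG, or ISR.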

Frequently Asked Questions

What issues can frameworks create?

Client-side only rendering, complex routing, and big JavaScript bundles that slow Core Web Vitals.

How do you address them?

We run audits, help with server-side or incremental rendering, fix routing, and trim the bundle size.

Which tech stacks do you work with?

We support Next.js, Nuxt, Angular, React, Vue, and others.

How is success measured?

More priority pages showing in the index, faster Core Web Vitals, and a lift in qualified organic traffic.

Can your devs collaborate with ours?

Yes, we provide implementation support and quality assurance the whole way through.